This post was originally published August 21, 2024 but has been revised with current data.

Recently, NVIDIA and Mistral AI unveiled Mistral NeMo 12B, a state-of-the-art large language model (LLM). Mistral NeMo 12B consistently outperforms similarly sized models on a wide range of benchmarks.

We announced Mistral-NeMo-Minitron 8B, one of the most advanced open-access models in its size class. This model consistently delivers leading accuracy on nine popular benchmarks. The Mistral-NeMo-Minitron 8B base model was obtained by width-pruning the Mistral NeMo 12B base model, followed by a light retraining process using knowledge distillation. This is a successful recipe that NVIDIA originally proposed in the paper, Compact Language Models via Pruning and Knowledge Distillation. It’s been proven time and again with NVIDIA Minitron 8B and 4B, and Llama-3.1-Minitron 4B models.

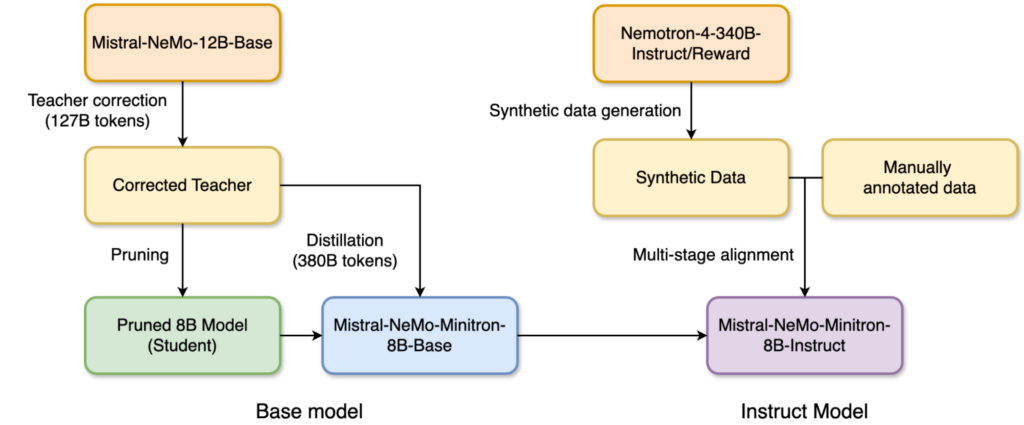

In Figure 1, the Nemotron-4-340B-Instruct and -Reward models were used to generate synthetic data for the alignment.

| Model | MMLU 5-shot | GSM8K 0-shot | GPQA 0-shot | HumanEval 0-shot | MBPP 0-shot | IFEval | MTBench (GPT4-Turbo) | BFCL v2 Live |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Mistral-NeMo-Minitron 8B Instruct | 70.4 | 87.1 | 31.5 | 71.3 | 72.5 | 84.4 | 7.86 | 67.6 |
| Llama-3.1-8B-Instruct | 69.4 | 83.9 | 30.4 | 72.6 | 72.8 | 79.7 | 7.78 | 44.3 |
| Mistral-NeMo-12B-Instruct | 68.4 | 79.8 | 28.6 | 68.3 | 66.7 | 64.7 | 8.10 | 47.9 |

| Model | Training tokens | Winogrande 5-shot | ARC Challenge 25-shot | MMLU 5-shot | HellaSwag 10-shot | GSM8K 5-shot | TruthfulQA 0-shot | XLSum en (20%) 3-shot | MBPP 0-shot | HumanEval 0-shot |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Llama-3.1-8B | 15T | 77.27 | 57.94 | 65.28 | 81.80 | 48.60 | 45.06 | 30.05 | 42.27 | 24.76 |
| Gemma-7B | 6T | 78 | 61 | 64 | 82 | 50 | 45 | 17 | 39 | 32 |
| Mistral-NeMo-Minitron-8B | 380B | 80.35 | 64.42 | 69.51 | 83.03 | 58.45 | 47.56 | 31.94 | 43.77 | 36.22 |
| Mistral-NeMo-12B | N/A | 82.24 | 65.10 | 68.99 | 85.16 | 56.41 | 49.79 | 33.43 | 42.63 | 23.78 |

Overview of model pruning and distillation

Model pruning is the process of making a model smaller and leaner, either by dropping layers (depth pruning) or by dropping neurons, attention heads, and embedding channels (width pruning). Pruning is often accompanied by some amount of retraining for accuracy recovery.
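
As a rough illustration of width pruning, the sketch below scores each intermediate neuron of a toy two-layer MLP by its mean activation magnitude over a small calibration batch, then keeps only the highest-scoring neurons. Everything in it is an assumption made for illustration: the ReLU MLP, the scoring rule, the toy weights, and the function names `neuron_importance` and `width_prune_mlp` are hypothetical and are not taken from the NVIDIA implementation.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product, adequate for a toy example."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def neuron_importance(calib: Matrix, w_in: Matrix) -> List[float]:
    """Mean activation magnitude of each intermediate neuron (assumed scoring rule)."""
    acts = [[max(0.0, v) for v in row] for row in matmul(calib, w_in)]  # ReLU
    return [sum(abs(row[j]) for row in acts) / len(acts) for j in range(len(w_in[0]))]


def width_prune_mlp(
    w_in: Matrix, w_out: Matrix, scores: List[float], keep: int
) -> Tuple[Matrix, Matrix, List[int]]:
    """Keep the `keep` highest-scoring neurons, preserving their original order."""
    ranked = sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)
    kept = sorted(ranked[:keep])
    pruned_in = [[row[j] for j in kept] for row in w_in]  # drop columns of W_in
    pruned_out = [w_out[j] for j in kept]                 # drop matching rows of W_out
    return pruned_in, pruned_out, kept


# Toy layer: hidden size 2, intermediate size 4 (hypothetical values).
w_in = [[1.0, 0.0, 0.5, -1.0],
        [0.0, 0.1, 0.5, -1.0]]
w_out = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
calib = [[1.0, 2.0], [3.0, 1.0]]  # small calibration batch

scores = neuron_importance(calib, w_in)
pruned_in, pruned_out, kept = width_prune_mlp(w_in, w_out, scores, keep=2)
print(kept)  # indices of the neurons that survive pruning
```

Dropping a neuron removes one column of the input projection and the matching row of the output projection, so the layer's input and output shapes stay the same while its intermediate width shrinks.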

Model distillation is a technique used to transfer knowledge from a large, complex model, often called the teacher model, to a smaller, simpler student model. The goal is to create a more efficient model that retains much of the predictive power of the original, larger model while being faster and less resource-intensive to run. Here, we use distillation as a light retraining procedure after pruning, on a dataset much smaller than the one used to train a model from scratch.
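
To make the teacher-student idea concrete, here is a minimal sketch of a distillation loss for a single token position. It assumes the student is trained to match the teacher's output distribution by minimizing the KL divergence between the two softmax distributions, which is a common choice. This post does not state the exact loss, so treat the loss form, the `temperature` parameter, and the function names as assumptions.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax over one vector of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(
    teacher_logits: List[float], student_logits: List[float], temperature: float = 1.0
) -> float:
    """KL(teacher || student) for one token position (assumed loss form)."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * (math.log(pi) - math.log(qi)) for pi, qi in zip(p, q) if pi > 0.0)


# Hypothetical logits over a four-token vocabulary.
teacher = [2.0, 1.0, 0.1, -1.0]
student = [1.5, 1.2, 0.3, -0.5]

print(kd_loss(teacher, student))  # positive: the student differs from the teacher
print(kd_loss(teacher, teacher))  # zero: the distributions are identical
```

In a real training loop this quantity would be averaged over all token positions in a batch and backpropagated through the student only, with the teacher's weights kept frozen.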

Iterative pruning and distillation is an approach where, starting from a single pretrained model, multiple progressively smaller models can be obtained. For example, a 15B model can be pruned and distilled to obtain an 8B model, which in turn serves as a starting point for pruning and distilling a 4B model, and so on.

The combination of model pruning followed by light retraining through distillation is an effective and cost-efficient approach to training a family of models. For each additional model, just 100-400B tokens are used for retraining, a greater than 40x reduction compared to training from scratch. As such, the compute cost savings for training a family of models (12B, 8B, and 4B) are up to 1.95x compared to training all models from scratch.

The lessons from extensive ablation studies have been summarized into 10 best practices for structured weight pruning combined with knowledge distillation. We found that width pruning consistently outperforms depth pruning and, most importantly, that pruned and distilled models outperform models trained from scratch in quality.

Mistral-NeMo-Minitron 8B

Following our best practices, we width-pruned the Mistral NeMo 12B model to obtain an 8B target model. This section details the steps and parameters used to obtain the Mistral-NeMo-Minitron 8B base model, as well as its performance.

Teacher fine-tuning

To correct for the distribution shift between the data the model was originally trained on and our dataset, we first fine-tuned the unpruned Mistral NeMo 12B model on our dataset using 127B tokens. Experiments showed that, without this correction, the teacher provides suboptimal guidance on the dataset during distillation.

Width-only pruning

Given our goal of obtaining the strongest 8B model possible, we proceeded with width-only pruning. We pruned both the embedding (hidden) and MLP intermediate dimensions along the width axis to compress Mistral NeMo 12B. Specifically, we computed importance scores for each attention head, embedding channel, and MLP hidden dimension using the activation-based strategy. Following importance estimation, we:

- Pruned the MLP intermediate dimension from 14336 to 11520

- Pruned the hidden size from 5120 to 4096

- Retained the attention head count and number of layers
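
A back-of-the-envelope parameter count shows how these two changes take the model from roughly 12B to roughly 8B parameters. The hidden sizes, MLP intermediate sizes, and the 40-layer depth come from this post. The remaining values (32 query heads, 8 key-value heads, a head dimension of 128, a 131,072-token vocabulary, a three-matrix gated MLP, and untied input and output embeddings) are assumptions about the architecture, and the small normalization weights are ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Config:
    hidden: int                # embedding (hidden) size, from the post
    mlp_intermediate: int      # MLP intermediate size, from the post
    layers: int = 40           # from the post; unchanged by width pruning
    heads: int = 32            # assumption
    kv_heads: int = 8          # assumption (grouped-query attention)
    head_dim: int = 128        # assumption
    vocab: int = 131072        # assumption


def approx_params(c: Config) -> int:
    """Approximate weight count, ignoring normalization layers."""
    q_and_o = 2 * c.hidden * c.heads * c.head_dim      # query and output projections
    k_and_v = 2 * c.hidden * c.kv_heads * c.head_dim   # key and value projections
    mlp = 3 * c.hidden * c.mlp_intermediate            # gate, up, and down projections
    embeddings = 2 * c.vocab * c.hidden                # input embedding and output head
    return c.layers * (q_and_o + k_and_v + mlp) + embeddings


teacher = Config(hidden=5120, mlp_intermediate=14336)
student = Config(hidden=4096, mlp_intermediate=11520)

for name, cfg in (("teacher", teacher), ("student", student)):
    print(f"{name}: {approx_params(cfg) / 1e9:.2f}B parameters")
```

Under these assumptions the estimate comes to about 12.2B parameters for the teacher and about 8.4B for the pruned student. Most of the reduction comes from the MLP blocks, which shrink along both the hidden and the intermediate dimension.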

Distillation parameters

We distilled the model with a peak learning rate of 1e-4, a minimum learning rate of 4.5e-7, a linear warmup of 60 steps, a cosine decay schedule, and a global batch size of 768, using 380B tokens (the same dataset used in teacher fine-tuning).
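
The schedule described above can be sketched as a function of the training step. The peak rate, minimum rate, and warmup length come from this post. The total step count is not stated, so `total_steps` below is a hypothetical value, and the exact warmup starting point and decay formula are assumptions.

```python
import math

PEAK_LR = 1e-4      # from the post
MIN_LR = 4.5e-7     # from the post
WARMUP_STEPS = 60   # from the post


def learning_rate(step: int, total_steps: int) -> float:
    """Linear warmup to PEAK_LR, then cosine decay down to MIN_LR."""
    if step < WARMUP_STEPS:
        # Ramp linearly so that the last warmup step reaches the peak rate.
        return PEAK_LR * (step + 1) / WARMUP_STEPS
    # Fraction of the decay phase completed, clamped to [0, 1].
    progress = min(1.0, (step - WARMUP_STEPS) / max(1, total_steps - WARMUP_STEPS))
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return MIN_LR + (PEAK_LR - MIN_LR) * cosine


total_steps = 10_000  # hypothetical; the post does not give the step count
for step in (0, 59, 60, 5_000, 10_000):
    print(step, learning_rate(step, total_steps))
```

The rate climbs during the first 60 steps, sits at its peak when decay begins, and then falls smoothly to the minimum by the final step.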

Mistral-NeMo-Minitron-8B-Instruct

We applied an advanced alignment technique consisting of two-stage instruction fine-tuning and two-stage preference optimization, resulting in a state-of-the-art instruct model with excellent performance on instruction following, language reasoning, function calling, and safety benchmarks.

The alignment data was synthetically generated using the Nemotron-4-340B-Instruct model in conjunction with the Nemotron-4-340B-Reward model. The model alignment was done with NVIDIA NeMo Aligner.

Performance benchmarks

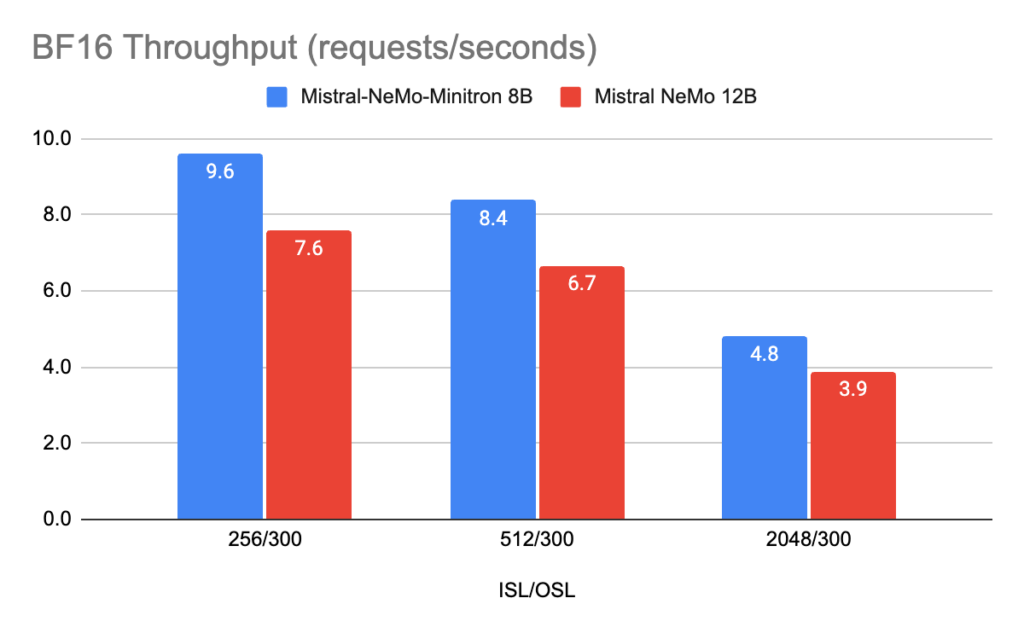

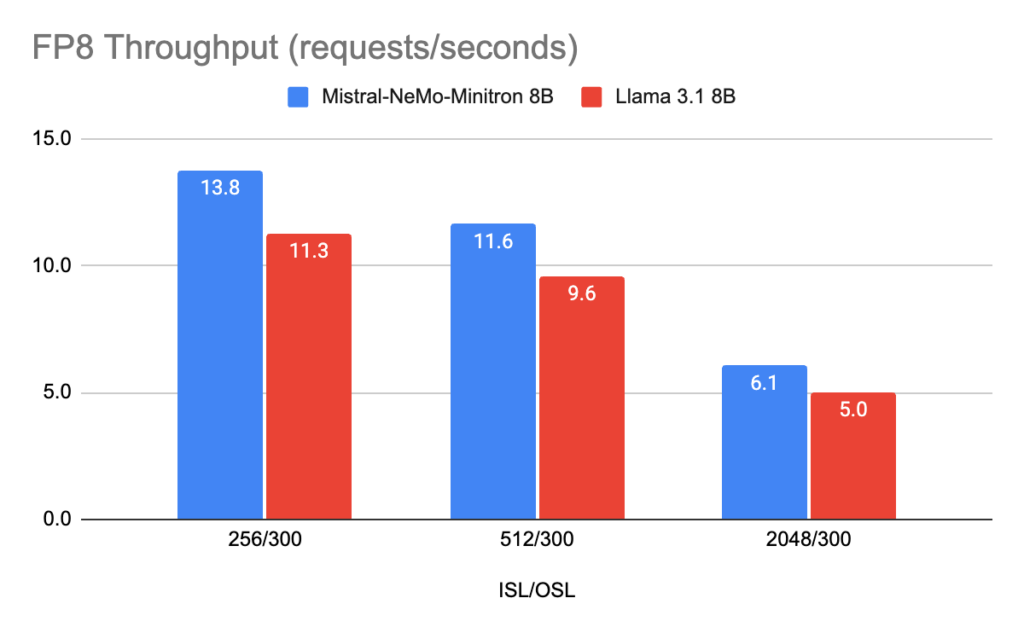

We optimized the Mistral-NeMo-Minitron-8B-Base model, the teacher Mistral-NeMo-12B model, and the Llama-3.1-8B model with NVIDIA TensorRT-LLM, an open-source toolkit for optimized LLM inference.

Figures 2 and 3 show the throughput, in requests per second, of the different models in FP8 and BF16 precision on different use cases, represented as input sequence length/output sequence length (ISL/OSL) combinations at batch size 32 on one NVIDIA H100 80-GB GPU.

The Llama-3.1-8B model is the fastest, averaging about 1.4x the throughput of Mistral-NeMo-12B, followed by Mistral-NeMo-Minitron-8B-Base at a 1.2x improvement over Mistral-NeMo-12B. This is primarily because the Llama-3.1-8B model has 32 layers, whereas Mistral-NeMo-12B has 40 layers, a depth that width-only pruning leaves unchanged in Mistral-NeMo-Minitron-8B-Base.

Deployment in FP8 also delivers a performance boost of ~1.4x across all three models compared to BF16.

Conclusion

Mistral-NeMo-Minitron-8B provides class-leading accuracy and consistently outperforms recently introduced state-of-the-art models of similar size. Mistral-NeMo-Minitron-8B is our first work on the distillation of the Mistral-NeMo-12B model and provides strong support for our structured weight pruning combined with knowledge distillation best practices.

Mistral-NeMo-Minitron-8B-Instruct also demonstrated our state-of-the-art alignment training recipe. Further work distilling, aligning, and obtaining even smaller and more accurate models is planned. Implementation support for depth pruning and distillation is available in the NVIDIA NeMo framework for generative AI training. Example usage is provided as a notebook.

Acknowledgments

This work would not have been possible without contributions from many people at NVIDIA. To mention a few of them:

Foundation model: Sharath Turuvekere Sreenivas, Saurav Muralidharan, Raviraj Joshi, Marcin Chochowski, Sanjeev Satheesh, Jupinder Parmar, Pavlo Molchanov, Mostofa Patwary, Daniel Korzekwa, Ashwath Aithal, Mohammad Shoeybi, Bryan Catanzaro, and Jan Kautz

Alignment: Gerald Shen, Jiaqi Zeng, Ameya Sunil Mahabaleshwarkar, Zijia Chen, Hayley Ross, Brandon Rowlett, Oluwatobi Olabiyi, Shizhe Diao, Yoshi Suhara, Shengyang Sun, Zhilin Wang, Yi Dong, Zihan Liu, Rajarshi Roy, Wei Ping, Makesh Narsimhan Sreedhar, Shaona Ghosh, Somshubra Majumdar, Vahid Noroozi, Aleksander Ficek, Siddhartha Jain, Wasi Uddin Ahmad, Jocelyn Huang, Sean Narenthiran, Igor Gitman, Shubham Toshniwal, Ivan Moshkov, Evelina Bakhturina, Matvei Novikov, Fei Jia, Boris Ginsburg, and Oleksii Kuchaiev

TensorRT-LLM: Bobby Chen, James Shen, and Chenhan Yu

Hugging Face support: Ao Tang, Yoshi Suhara, and Greg Heinrich